Two administrative reminders:

- I'm running another set of my hands-on Claude Code workshops! If you've wanted to learn about AI-coding with Python and Pandas, then you should join me for a combination of problem solving and discussion. You can learn more at https://lernerpython.com/code-with-claude/, or watch the recording of an info session I held earlier this week, at https://www.youtube.com/watch?v=74k9Cr4epKY . I promise that the sessions will be great fun, and that we'll all learn a lot together. Questions? Just e-mail me at reuven@LernerPython.com.

- We'll have Pandas office hours on Wednesday of next week. If you're a paid BW subscriber, or a member of my LernerPython+data subscription platform, then come ask me anything you want about Pandas and data analysis in Python! I'll send Zoom info in the coming days.

This week, the US and Israel continued to attack Iran. Iran, for its part, struck not only at US military bases and at Israeli cities, but also at a wide variety of countries, including their neighbors in the Persian Gulf. They also shut down the Strait of Hormuz (as we discussed last week, https://www.bambooweekly.com/bamboo-weekly-160-strait-of-hormuz/), and hit oil production and storage locations in a number of countries. Not surprisingly, the price of oil is going up — and the Iranians are boasting that they'll push the price to $200/barrel, more than 3x the price it was at just last month.

Israeli cities have been on the receiving end of numerous Iranian missiles since February 28th. Every so often, we'll get a notice on our phone saying that we need to go to a shelter, For some, that means an actual shelter. For others, it means an underground parking garage or light-rail station.

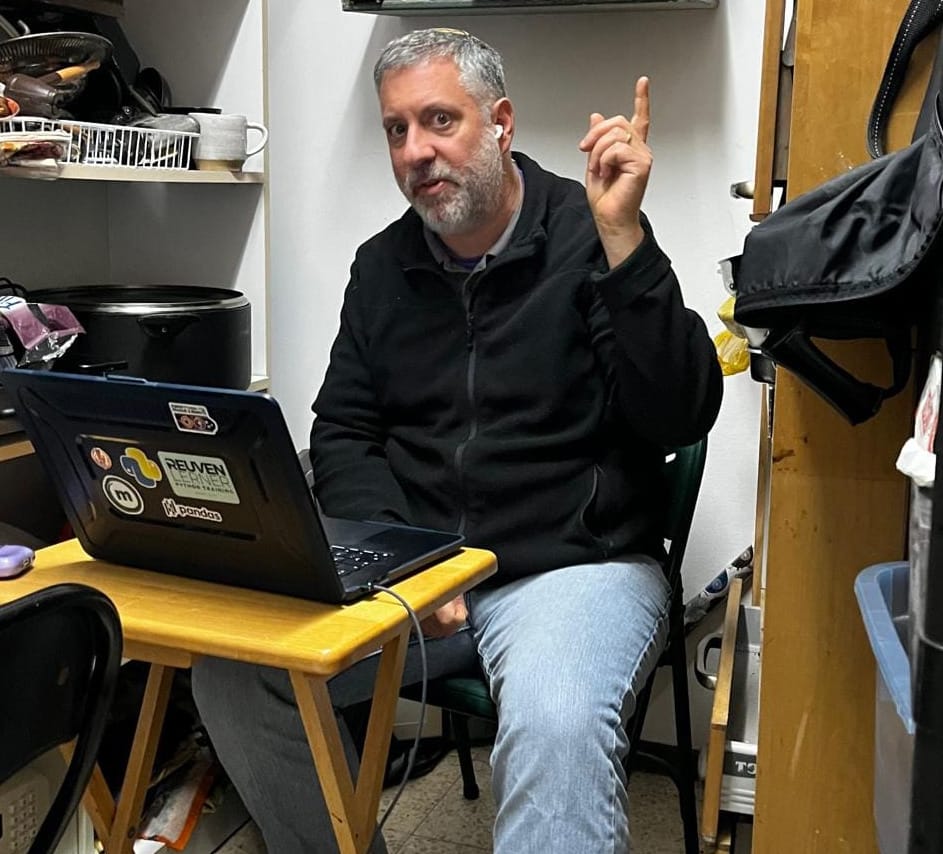

In my case, we have a thick-walled, reinforced "safe room" in our house; here's a picture that my wife took on second day of the war, when I conducted office hours from our safe room:

I really have to stress that while these alerts are exhausting and frustrating, and have forced us to put the contents of our storage room (aka our safe room) all over our laundry room and living room floor), we feel safe and protected there — even when we hear big booms.

It turns out that the notifications that we get on our phones are logged on a government Web site, and can be retrieved via an API. Developer Matan Ellhayani used this data to create https://canishower.com/, indicating how likely an alarm is to sound when you want to shower. The data is also stored in a GitHub repo maintained by Danny Leshem (https://dannyleshem.com/), at https://github.com/dleshem/israel-alerts-data.

I'll note that Israeli government data, including this data set, includes information for Israel and all the territories it controls, including those beyond the Green Line.

Data and five questions

This week's main data comes from https://github.com/dleshem/israel-alerts-data. But because location and alert data is in Hebrew, I have prepared a Python module containing two dictionaries you can use in translating the words into English. The file that I provided yesterday was actually missing a number of locations; I've updated it here:

Paid subscribers, both to Bamboo Weekly and to my LernerPython+data membership program (https://LernerPython.com) get all of the questions and answers, as well as downloadable data files, downloadable versions of my notebooks, one-click access to my notebooks, and invitations to monthly office hours.

Learning goals for this week include working with CSV files, date and time data, grouping, and plotting.

Here are the five questions I asked, along with my solutions and explanations:

Download the alert information from GitHub, keeping only the alertDate column (as datetime values), the data column (separating each location into a separate row, and removing the numbers), and category_desc. Use the dictionaries in the provided bw161_translations.py file to translate the location names and messages from Hebrew into English.

I started, as usual by loading Pandas as Plotly Express — as well as the bw161_translations module, which I placed in the same directory as my Marimo notebook:

import pandas as pd

from plotly import express as px

import bw161_translationsNext, I used read_csv not to read from a file, but to retrieve the latest and greatest version of the CSV file with Israeli government alerts. All of the read_* methods in Pandas can take a filename, file-like object, or a URL (as a string), and will know what to do with them:

url = 'https://github.com/dleshem/israel-alerts-data/raw/refs/heads/main/israel-alerts.csv'

df = (

pd

.read_csv(url)

)But wait, I actually asked to be a bit more selective than this:

- I only wanted some of the columns. I chose them with

usecols. - I wanted the

alertDatecolumn to be treated as adatetime, which required passing theparse_dateskeyword argument. - I wanted

alertDateto be the data frame's index, and thus passed theindex_colkeyword argument here, too.

The resulting call to read_csv looks like this:

df = (

pd

.read_csv(url,

usecols=['data', 'alertDate', 'category', 'category_desc'],

parse_dates=['alertDate'],

index_col = 'alertDate')

)But of course, that's not enough to do our analysis. One of the big sticking points with this data is the fact that each alert might be sent to more than one location. All of those receiving locations are put in the data column, separated by commas.

To fix that, we need to turn a row with "A, B" into two rows, one with "A" and one with "B", keeping all other values – including the index – identical to what was there before.

The first step is to break our comma-separated data into a list. In Python, we would do that with str.split. In Pandas, we do it with ... str.split, a method we can run on our string column. Here, I used assign to replace the original data column (containing strings) with new values (containing lists of strings):

df = (

pd

.read_csv(url,

usecols=['data', 'alertDate', 'category', 'category_desc'],

parse_dates=['alertDate'],

index_col = 'alertDate')

.assign(data = pd.col('data').str.split(','))

)Now what? We can use the explode method on our data frame to get one row for each list element. (I know, the method name is a bit too close to the subject matter for this week!) By invoking explode and mentioning data, we'll get back a much longer data frame than before, but one in which data contains one location (string) per row:

df = (

pd

.read_csv(url,

usecols=['data', 'alertDate', 'category', 'category_desc'],

parse_dates=['alertDate'],

index_col = 'alertDate')

.assign(data = pd.col('data').str.split(','))

.explode('data')

)We're making progress! But the strings are still messy. First, they contain numbers, which I assume are for some administrative purposes, but won't help us here. Second, the strings contain leading and trailing whitespace. That'll make it hard for us to perform a translation from Hebrew to English.

I thus ran assign a second time, replacing data with the result of (a) removing any digits, using a regular expression with str.replace, and (b) removing leading and trailing whitespace with str.strip.

Why couldn't we use str.replace or str.strip before? Why did we have to wait until after performing the str.split? Because only now do we have each place name on its own. Doing it earlier would have required a much more complex regular expression.

Our code currently looks like this:

df = (

pd

.read_csv(url,

usecols=['data', 'alertDate', 'category', 'category_desc'],

parse_dates=['alertDate'],

index_col = 'alertDate')

.assign(data = pd.col('data').str.split(','))

.explode('data')

.assign(data = pd.col('data').str.replace(r'\d+', '', regex=True).str.strip())

)Two things remain to be done: First, we need to sort the data frame by the index. That's because we'll be doing some time-related actions using the index, which contains datetime values, and that requires we first run sort_index. (Otherwise, we'll get an error telling us that the index needs to be sorted.)

Second, we need to perform the translation. That's easily done with the replace method. (Don't confuse replace with str.replace – they're similar, but not the same.) We give replace a dictionary, and it uses that dict as a "from" and "to" translation table.

But wait – we have two dictionaries that we want to use, one for locations and one for alert text. How can we use both?

One option is to run replace twice, one with each dict. But modern versions of Python allow us to merge two dicts into a single one with the | (vertical bar) operator. Given that there isn't any overlap between the keys in these two dicts, I thought it would be fine to perform that.

So here's how I create my data frame, with things translated:

df = (

pd

.read_csv(url,

usecols=['data', 'alertDate', 'category', 'category_desc'],

parse_dates=['alertDate'],

index_col = 'alertDate')

.assign(data = pd.col('data').str.split(','))

.explode('data')

.assign(data = pd.col('data').str.replace(r'\d+', '', regex=True).str.strip())

.sort_index()

.replace(bw161_translations.alerts | bw161_translations.locations)

)The result? A data frame with 352,469 rows and 3 columns, plus the datetime index.

Wait – 352,469 rows? Have that many missiles really been fired at Israel? No, not really:

- The data set reflects all security notifications from Jul 24, 2014 through (as of this writing) Mar 12, 2026.

- It reflects notifications, not missiles. So one missile headed for the center of Israel, with notifications to 30 locations, would count as 30 rows in our data frame.

- It includes notifications for all events, including earthquakes and terrorist incidents.

- It includes the "all clear" notifications, telling people that they can leave shelters and safe rooms.

So while the number is large, it's not quite as shockingly large as you might at first expect.

Starting on February 28th, how many alerts for rocket (category of 1) have there been in each location in Israel? Which locations have had the greatest number of alerts?

To get this information, we first need to filter our data frame – both by category (not category_id as I mistakenly said in the original question) and by date.

We can do both with loc . To filter by category, we can use pd.col, specifying the column name and indicating that it must be == to 1. Here, I'm using Pandas 3 syntax; in previous versions, we would have needed to use a lambda expression:

(

df

.loc[pd.col('category') == 1]

)Next, I keep only those rows from February 28th and onward. Because the index contains datetime values, and because they're sorted, I can use a slice in loc, asking for all values from 2026-02-28 and onward:

(

df

.loc[pd.col('category') == 1]

.loc['2026-02-28':]

)Now I can perform the calculation: I wanted to know, for each location (i.e., the data column), how many times did it appear? One way would be to use groupby, passing the count aggregation method. But I often prefer to use value_counts.

To use it here, I modified my second invocation of loc to use two arguments – not just a row selector (the slice), but also the column selector ('data). Then I ran value_counts on that series. The index for this series were the location names, and the values (numbers) were the number of times that a rocket alert was sounded there. I then used head(50) to find the 50 places with the most alerts:

(

df

.loc[pd.col('category') == 1]

.loc['2026-02-28':, 'data']

.value_counts()

.head(50)

)The result:

data count

Ramat Gan - West 92

Tel Aviv - East 92

Tzafriya 91

Ariel Sharon Park 91

Givat Shmuel 91

Mishmar HaShiva 90

Beit Dagan 90

Kiryat Ono 90

Ramat Gan - East 90

Holon 90

Or Yehuda 90

Azor 90

Givatayim 90

Beit Arif 89

Hamd 89

Tirat Yehuda 89

Yagel 89

Airport City 89

Ganot 89

Ma'as 89

Mikve Israel 89

Savyon 89

Petah Tikva 89

Bnei Brak 89

Gat Rimon 89

Bnei Atarot 89

Nahalim 89

Gane Tikva 89

Magshimim 89

Mazor 89

Bareqet 88

Kfar Chabad 88

Rishon LeZion - East 88

Be'erot Yitzhak 88

Tel Aviv - South City and Jaffa 88

Yehud Monosson 88

Rantiyya 88

Nofech 88

Rishon LeZion - West 88

Shoham 87

Olam Hesed Farm 87

Ofrim 87

Givat Kaf-Het 87

Tel Aviv - City Center 87

Tel Aviv - Beyond the Yarkon 87

Bat Yam 87

Nahshonim 87

Hevel Modi'in Shoham Industrial Zone 87

Ahiezer 87

Avihai Farm 86Some cities, such as Tel Aviv, are divided into regions, and have more than one location name. Others are small enough to have ea single region.

Remember that just because there's an alarm, it doesn't mean that a missile hit there! The alerts are based on the trajectory of the missiles, in order to get anyone in its rough targeted area to safety before it hits or (more likely) before shrapnel from the Arrow or Iron Dome reaches the ground.

Which locations had the largest number of hits? We can see that from the top of the list, but we can also grab all of those locations by calculating the maximum value, and keeping all rows with that:

(

df

.loc[pd.col('category') == 1]

.loc['2026-02-28':, 'data']

.value_counts()

.loc[lambda s_: s_ == s_.max()]

)

Here, I used loc, much as I did before – but I used lambda, rather than pd.col, because I'm working with a series, rather than a data frame – and pd.col only works with data frames. We thus use lambda to compare each value in the series with the maximum value in the series:

data count

Ramat Gan - West 92

Tel Aviv - East 92Sure enough, it's the top two rows from our previous solution, the eastern portion of Tel Aviv and the western portion of Ramat Gan (a city right next to Tel Aviv).